AI compliance in the United States: from state laws to defense contracts and automotive safety

Updated February 2026

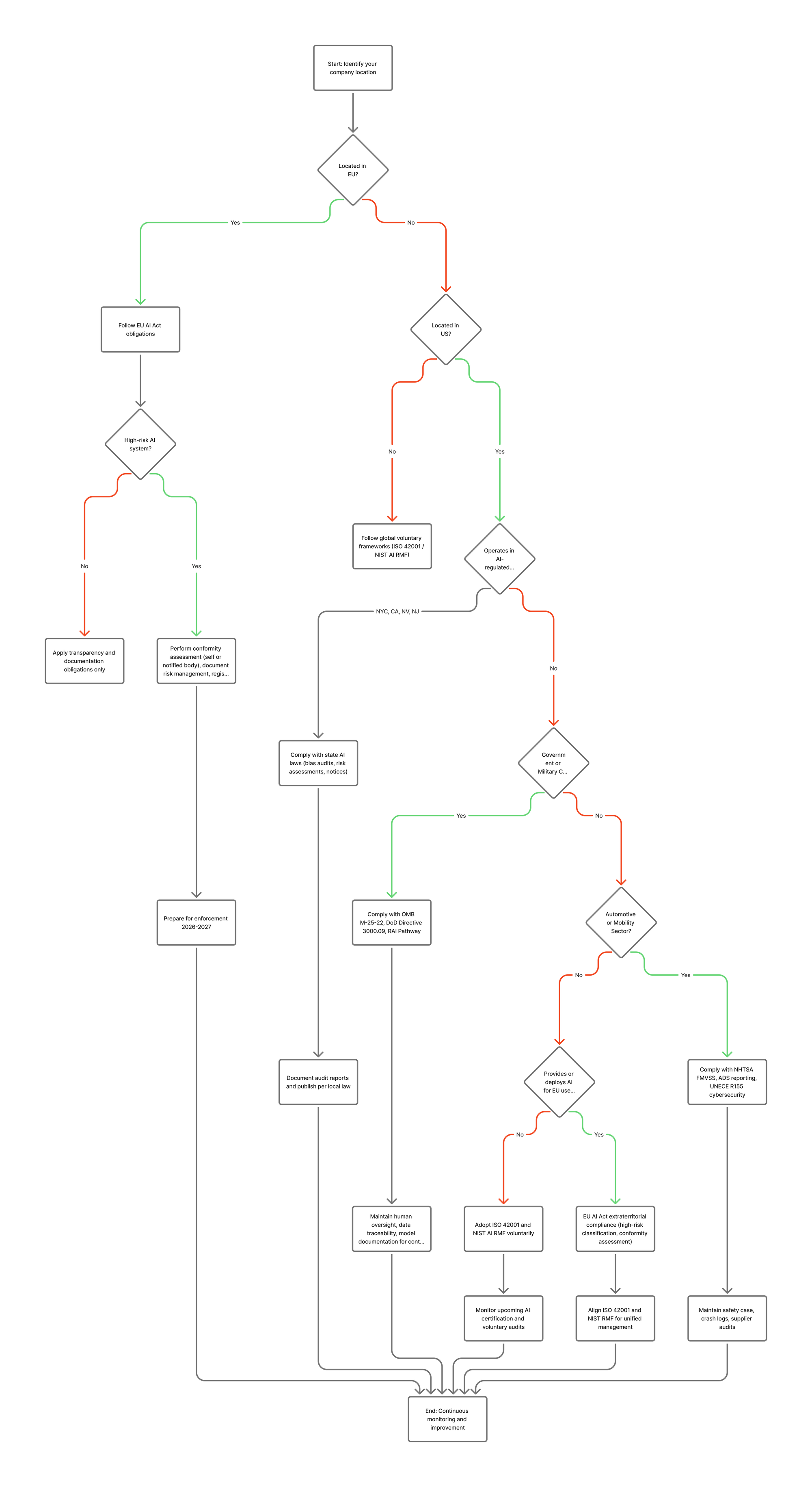

The downloadable PDF and the decision tree chart are at the end of this article.

The United States doesn't yet have an EU-style Artificial Intelligence Act, but that doesn't mean AI is unregulated. Across states, federal agencies, and key industries like defense and automotive, a growing patchwork of enforceable rules already governs how AI may be developed, tested, procured, and deployed.

While many of these rules are sector-specific and contract-driven rather than certification-based, together they form the first generation of US AI oversight — and the federal government is now actively trying to shape (and in some cases challenge) what states are doing.

1. The first layer: state and local AI laws

Although federal AI legislation is still under discussion, several states and municipalities have enacted binding requirements — particularly where AI directly impacts employment, privacy, or consumer rights.

| Jurisdiction (law) | Effective date | Key obligations | Enforcement status |
|---|---|---|---|
| New York City (Local Law 144) | Effective July 5, 2023 | Employers using "automated employment decision tools" (AEDTs) must commission an annual independent bias audit, publicly post the results, and give notice to applicants. | Active but under-enforced. A December 2025 State Comptroller audit found significant enforcement gaps, and DCWP has committed to overhauling its approach. NY State Bill S4394A proposes expanding AEDT regulation statewide. |
| California (ADMT under CCPA) | Regulations effective January 1, 2026; ADMT compliance required by January 1, 2027 | Risk assessments, consumer notification, opt-out mechanisms, and pre-use notices are required for automated decision-making technologies (ADMT) used in high-impact areas such as finance, hiring, or health. | Finalized September 2025. Businesses already using ADMT must fully comply by January 1, 2027; new ADMT deployments after that date must be compliant from day one. CCPA penalties apply: up to $2,500 per violation and $7,500 per intentional violation. |
| Nevada (Assembly Bill 406) | Signed June 5, 2025; effective July 1, 2025 | Prohibits AI systems from providing or claiming to provide mental or behavioral healthcare. Licensed providers may use AI only for administrative tasks (scheduling, billing, notes) with independent review of outputs. Also restricts AI in public school mental health services. | Active enforcement by state health regulators. Civil penalties up to $15,000 per incident for AI providers; professional disciplinary action for licensed providers who violate the law. |
| Colorado (AI Act, SB 24-205) | Signed into law 2024; effective date postponed from February 1, 2026 to June 30, 2026 (SB 25B-004) | Requires developers and deployers of "high-risk AI systems" to use reasonable care to avoid algorithmic discrimination. Deployers must conduct impact assessments, provide consumer notices, and maintain documentation. | Under active federal scrutiny. The Trump administration's December 2025 executive order specifically cited Colorado's AI Act as an example of a law that "may force AI models to produce false results." The law is a primary target for the new AI Litigation Task Force. |
| New Jersey (AG Guidance, January 2025) | Effective January 2025 | NJ Attorney General guidance confirms that the existing Law Against Discrimination (LAD) applies to AI-driven employment decisions. Employers can violate the LAD even without discriminatory intent if automated tools produce disparate impact. | Active: enforced through existing anti-discrimination law. Earlier bills (S2964/A3855) requiring independent bias audits of automated hiring tools expired in the 2024-2025 session without passage. |

What this means in practice

Companies using AI for employment screening, financial decisions, or healthcare applications in these jurisdictions already have enforceable duties:

- keep auditable documentation,

- perform bias testing (usually via an independent third party), and

- publish audit results or provide them to regulators on request.

This is the first taste of mandatory AI audits in the United States — even before a federal framework exists.
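To make the bias-testing duty more concrete, here is a minimal sketch of the core metric in a Local Law 144-style audit: the impact ratio, which is each category's selection rate divided by the selection rate of the most-selected category. The category names and counts are made up, and the 0.8 review threshold is an assumption borrowed from the four-fifths rule of thumb, not a threshold set by Local Law 144. A real audit must be carried out by an independent auditor on actual historical data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CategoryCounts:
    """Applicants assessed and selected for one demographic category."""
    name: str
    assessed: int
    selected: int

    @property
    def selection_rate(self) -> float:
        # Share of assessed applicants who were selected (0.0 if nobody was assessed).
        return self.selected / self.assessed if self.assessed else 0.0


def impact_ratios(categories: list[CategoryCounts]) -> dict[str, float]:
    """Divide each category's selection rate by the highest selection rate."""
    best = max(c.selection_rate for c in categories)
    if best == 0:
        raise ValueError("no category has any selections")
    return {c.name: c.selection_rate / best for c in categories}


# Hypothetical counts, for illustration only.
sample = [
    CategoryCounts("group_a", assessed=200, selected=100),  # selection rate 0.50
    CategoryCounts("group_b", assessed=100, selected=30),   # selection rate 0.30
]
ratios = impact_ratios(sample)

# Assumed review trigger (four-fifths rule of thumb), not a statutory limit.
REVIEW_THRESHOLD = 0.8
flagged = [name for name, ratio in ratios.items() if ratio < REVIEW_THRESHOLD]
print(ratios, flagged)
```

In this made-up sample, `group_b` has an impact ratio of about 0.6 and would be flagged for closer review.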

And here's the twist: the federal government is simultaneously pushing to limit what states can do. On December 11, 2025, President Trump signed an executive order titled "Ensuring a National Policy Framework for Artificial Intelligence," which establishes an AI Litigation Task Force to challenge state AI laws in court and directs the Commerce Department to identify "onerous" state regulations.

However — and this is critical for anyone doing compliance planning — until courts actually strike down a state law or Congress passes preemptive legislation, every state law on the books remains fully enforceable. Companies that stop complying based on a federal executive order's aspirations rather than its legal effect would be making a very expensive gamble.

2. Federal obligations: executive guidance and agency frameworks

At the national level, AI oversight is driven by executive orders and agency-specific memoranda rather than a single law. The federal approach shifted significantly in January 2025 when the new administration took office.

Executive Order 14179 (January 23, 2025) — "Removing barriers to American leadership in artificial intelligence"

This replaced the Biden-era Executive Order 14110 (October 2023), which was revoked on the president's first day in office. Where EO 14110 emphasized safety testing, red-teaming, and transparency requirements, the new order focuses on removing regulatory barriers and promoting innovation. It directed federal agencies to review all prior AI policies and suspend or rescind any that conflict with the new pro-innovation approach.

Executive Order 14365 (December 11, 2025) — "Ensuring a national policy framework for artificial intelligence"

This order goes further, establishing:

- An AI Litigation Task Force (DOJ) to challenge state AI laws in court

- A Commerce Department evaluation of state AI laws deemed "onerous"

- Restrictions on federal funding (including BEAD broadband grants) to states with targeted AI regulations

- A directive to prepare legislation for a federal AI framework that would preempt conflicting state laws

- Carve-outs protecting state laws related to child safety, AI data center infrastructure, and state government procurement of AI

OMB M-25-21 and M-25-22 (April 2025)

These two memos replaced the Biden-era OMB M-24-10 and M-24-18, but preserved much of the underlying governance architecture:

- Every agency must still designate a Chief AI Officer and establish an AI Governance Board.

- Agencies must still inventory AI use cases and publish public AI strategies.

- "High-impact AI" (defined as systems whose output serves as a principal basis for decisions with legal, material, or significant effect on rights or safety) still requires minimum risk management practices, including:

  - Pre-deployment testing

  - AI impact assessments

  - Ongoing monitoring

  - Documentation and traceability

For vendors, this means any contract to deliver AI-enabled solutions to a federal agency still comes with documentation, transparency, and audit obligations — the requirements have been reframed, not removed.
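One way a vendor might keep track of the minimum practices listed above is a small evidence register per AI system. The sketch below is only an assumption about how such a register could look; the field names, the example system, and the `missing_practices` helper are hypothetical and are not taken from the OMB memos.

```python
from dataclasses import dataclass, field

# The four minimum practices named in the list above.
MINIMUM_PRACTICES = (
    "pre_deployment_testing",
    "ai_impact_assessment",
    "ongoing_monitoring",
    "documentation_and_traceability",
)


@dataclass
class AISystemRecord:
    name: str
    high_impact: bool
    # Maps a practice name to a pointer to its evidence (file path, ticket ID, etc.).
    evidence: dict[str, str] = field(default_factory=dict)


def missing_practices(record: AISystemRecord) -> list[str]:
    """Return practices that still lack evidence; empty if the system is not high-impact."""
    if not record.high_impact:
        return []
    return [p for p in MINIMUM_PRACTICES if not record.evidence.get(p)]


# Hypothetical system with two of the four practices evidenced.
record = AISystemRecord(
    name="benefits-eligibility-model",
    high_impact=True,
    evidence={
        "pre_deployment_testing": "reports/test-summary.pdf",
        "ongoing_monitoring": "dashboards/drift",
    },
)
gaps = missing_practices(record)
print(gaps)
```

A register like this makes it easy to answer an agency's documentation request with a list of evidence pointers instead of an ad hoc search.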

The key shift: M-25-22 (procurement) now emphasizes maximizing use of U.S.-produced AI products and takes effect for solicitations issued after October 1, 2025. Contracts must include the ability to regularly monitor and evaluate AI system performance, risks, and effectiveness.

3. Military and defense contractors

Defense programs have had AI-related controls far longer than civilian ones. The Department of Defense (DoD) governs AI through a combination of policy, ethics directives, and procurement language. This sector has been the most stable through the administration change.

DoD Directive 3000.09 — Autonomy in weapon systems

Updated in January 2023, this directive remains in full effect. It requires "appropriate levels of human judgment over the use of force" and demands verification and validation (V&V), safety assurance, cybersecurity, and traceability for any autonomous system. Contractors building or integrating AI into weapons or command systems must provide evidence of these controls.

The FY2025 National Defense Authorization Act (Section 1066) now additionally requires the Secretary of Defense to submit annual reports to Congress on the approval and deployment of lethal autonomous weapon systems through December 31, 2029.

Responsible AI strategy and implementation pathway (RAI SIP)

The RAI SIP establishes governance, testing, data-quality, and workforce-training requirements across DoD programs. These requirements flow down through the Chief Digital and AI Office (CDAO) into solicitations. Contractors must maintain traceability of data and model decisions, demonstrate human-in-the-loop mechanisms, and comply with continuous monitoring expectations.

AI-specific clauses in procurement

Typical deliverables now include:

- Model documentation (training data lineage, evaluation methods, bias reports)

- Operational oversight plans (who reviews AI outputs, escalation process)

- Incident-response and security procedures

- Rights in data clauses ensuring DoD access to underlying code or data if needed for mission continuity

For smaller vendors, these clauses act as a de facto certification system: you cannot win or keep a DoD AI contract unless your internal controls match these expectations.

4. Automotive and mobility sector

The U.S. automotive industry is governed through safety law, not AI law — but AI is now integral to vehicle safety.

Federal layer: NHTSA oversight

The National Highway Traffic Safety Administration (NHTSA) enforces the Federal Motor Vehicle Safety Standards (FMVSS). Manufacturers must self-certify compliance with FMVSS before vehicles are sold.

The Standing General Order 2021-01 — originally issued in 2021 and most recently amended in April 2025 (third amendment, effective June 16, 2025) — requires mandatory crash/incident reporting for any vehicle using Automated Driving Systems (ADS) or Level 2 ADAS.

Under the 2025 amendment, reporting entities must notify NHTSA within five days of receiving notice of a crash involving a fatality, hospital-treated injury, vulnerable road user, airbag deployment, or vehicle tow-away. Reports must include sensor data, system logs, and preliminary cause assessments.
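The reporting trigger described above can be sketched as a small helper that checks the listed criteria and computes a due date. This is a simplification under stated assumptions: it treats the five days as calendar days counted from the date notice was received, and it ignores any other conditions in the order itself. The `CrashNotice` type and its field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Assumed to be calendar days; confirm against the text of the order.
REPORTING_WINDOW_DAYS = 5


@dataclass(frozen=True)
class CrashNotice:
    """What the reporting entity knows when it receives notice of a crash."""
    notice_received: date
    fatality: bool = False
    hospital_treated_injury: bool = False
    vulnerable_road_user: bool = False
    airbag_deployment: bool = False
    tow_away: bool = False

    def is_reportable(self) -> bool:
        # Any one of the criteria listed in the paragraph above triggers reporting.
        return any((
            self.fatality,
            self.hospital_treated_injury,
            self.vulnerable_road_user,
            self.airbag_deployment,
            self.tow_away,
        ))


def report_due_date(notice: CrashNotice) -> Optional[date]:
    """Return the assumed reporting deadline, or None if no criterion is met."""
    if not notice.is_reportable():
        return None
    return notice.notice_received + timedelta(days=REPORTING_WINDOW_DAYS)


# Hypothetical notices.
airbag_notice = CrashNotice(notice_received=date(2025, 7, 1), airbag_deployment=True)
minor_notice = CrashNotice(notice_received=date(2025, 7, 1))
print(report_due_date(airbag_notice), report_due_date(minor_notice))
```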

The SGO is currently scheduled to expire in April 2026. NHTSA has announced plans to issue a proposed rule to permanently codify the reporting requirements.

This updated SGO is part of the new Automated Vehicle (AV) Framework announced by Transportation Secretary Sean Duffy in April 2025, which aims to accelerate AV deployment while maintaining safety reporting.

State layer: California example

The California DMV requires testing permits, disengagement reports, and collision reports within 10 days. The California Public Utilities Commission (CPUC) demands data on AV "stoppage" or public-safety interference incidents. California is also considering legislation (SB 572) to ensure state-level crash reporting continues even if the federal SGO is weakened or eliminated.

Industry and international influence

The SAE J3016 levels of automation define what counts as Level 0 through Level 5 and guide design expectations. Global OEMs align with UNECE WP.29 R155 (Cybersecurity Management System) even for U.S. models, driving supplier audits on patching, vulnerability management, and threat analysis and risk assessment (TARA).

In short: automotive AI compliance today is a hybrid of self-certification, mandatory incident reporting, and global OEM audits — not yet a formal government-issued "AI certificate," but every bit as binding in practice.

5. Self-assessment, audits, and third-party verification — how enforcement works

| Sector | Who verifies compliance | Typical mechanism | Notes |
|---|---|---|---|
| Federal civilian agencies | Internal compliance + potential Inspector General audit | Self-assessment with required documentation; agencies may audit vendors post-award. | OMB M-25-21 preserves minimum risk management practices for "high-impact AI." Agencies retain third-party evaluation authority. |
| Defense contractors | Contracting Officer + DoD program office (CDAO/RAI) | Hybrid: internal V&V + external evaluation when risk or sensitivity is high (similar to CMMC). | DoD encourages independent test labs for autonomous systems. Annual congressional reporting now required through 2029. |
| Automotive | NHTSA & state regulators | Self-certification to FMVSS + mandatory reporting under SGO 2021-01 (third amendment); OEMs conduct supplier audits. | Non-compliance leads to recalls, civil penalties, or permit loss. SGO codification rulemaking expected 2026. |
| State employment/consumer AI laws | Local regulators (e.g., NYC DCWP, state AGs) | Third-party bias audit required by law (NYC); AG enforcement under existing anti-discrimination statutes (NJ, others). | First legally mandated external AI audits in U.S. Enforcement ramping up after Comptroller audit findings. Federal preemption attempts underway but not yet effective. |

So, in the U.S. system:

- Low/medium-risk AI → self-assessment, record-keeping, internal QA.

- High-risk or contractually sensitive AI → external or third-party review.

- Safety-critical or rights-impacting AI → mandatory incident reporting or independent audit.
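The three tiers above can be expressed as a small lookup, which is handy when triaging an inventory of AI systems. This is a sketch, not an official classification scheme: the `classify` function, its boolean inputs, and the choice to apply the strictest matching tier first are assumptions made for illustration.

```python
from enum import Enum


class RiskTier(Enum):
    LOW_MEDIUM = "low/medium-risk"
    HIGH = "high-risk or contractually sensitive"
    SAFETY_OR_RIGHTS = "safety-critical or rights-impacting"


# Verification mechanisms per tier, taken from the list above.
VERIFICATION = {
    RiskTier.LOW_MEDIUM: ["self-assessment", "record-keeping", "internal QA"],
    RiskTier.HIGH: ["external or third-party review"],
    RiskTier.SAFETY_OR_RIGHTS: ["mandatory incident reporting or independent audit"],
}


def classify(
    safety_critical: bool = False,
    rights_impacting: bool = False,
    high_risk: bool = False,
    contractually_sensitive: bool = False,
) -> RiskTier:
    """Pick the strictest tier that applies (an assumed tie-breaking rule)."""
    if safety_critical or rights_impacting:
        return RiskTier.SAFETY_OR_RIGHTS
    if high_risk or contractually_sensitive:
        return RiskTier.HIGH
    return RiskTier.LOW_MEDIUM


# Hypothetical examples: a resume-screening tool and an internal chatbot.
screening_tier = classify(rights_impacting=True, high_risk=True)
chatbot_tier = classify()
print(screening_tier.value, VERIFICATION[screening_tier])
print(chatbot_tier.value, VERIFICATION[chatbot_tier])
```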

6. How this appears in real contract language

Federal and industry templates already embed AI-specific clauses:

Example 1 – Federal procurement (OMB M-25-22)

"The Contractor shall provide sufficient documentation of the AI system, including data sources, training methods, and evaluation results, to allow the Agency to assess compliance with applicable standards and to perform audits or independent verification as required."

Example 2 – Supplier audit clause (adapted)

"Supplier shall permit the Customer or its authorized third party to audit compliance with AI risk-management requirements, including review of model training data, evaluation records, and change logs."

Example 3 – AI disclosure clause (state government)

"Seller shall disclose any Products or Services that utilize Artificial Intelligence and shall not employ such functionality without written authorization of the State and implementation of agreed safeguards."

Example 4 – Automotive quality addendum

"Supplier shall maintain documentation demonstrating compliance with cybersecurity and AI-function validation requirements equivalent to UNECE R155 and provide access for customer audit upon request."

Such language bridges current voluntary frameworks (like NIST AI RMF) with contractually enforceable obligations.

7. What companies should do now

The compliance landscape is more complex than it was a year ago — not less. The federal government wants to simplify regulation, but until legislation passes, companies face obligations from multiple directions simultaneously.

Organizations developing or deploying AI in sensitive contexts should:

- Adopt the NIST AI Risk Management Framework (AI RMF) to structure internal governance (Govern → Map → Measure → Manage). The NIST framework remains referenced across both administrations and is a safe foundation regardless of political shifts.

- Implement ISO/IEC 42001 (AI Management System) for an auditable layer of governance. This is the bridge between voluntary and mandatory, and between U.S. and international requirements. With the EU AI Act's August 2025 general-purpose AI obligations already in effect and broader provisions coming in August 2026, ISO/IEC 42001 gives you a single management-system framework that you can map to requirements on both sides of the Atlantic.

- Maintain a "technical file": model cards, data-provenance maps, evaluation results, limitations, human-oversight logs, incident records. Whether your next audit comes from a state regulator, a federal contracting officer, or an OEM customer, this documentation is what they'll ask for.

- Prepare for audits, even if they're not yet required. NYC's Comptroller audit revealed that enforcement is ramping up. California's ADMT rules kick in January 2027. Defense contracts already require this kind of evidence. When audits arrive, evidence needs to already exist.

- Monitor both state and federal developments. The December 2025 executive order signals that some state laws may be challenged, but it doesn't eliminate them. Companies need to comply with current law while tracking which specific regulations the AI Litigation Task Force targets and what courts decide.

- Review contract terms: expect to see clauses on transparency, data access, human oversight, and independent evaluation in both federal and private-sector agreements.
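The "technical file" recommended above is easier to keep audit-ready if it is tracked as a simple register that can report its own gaps. The sketch below is one possible shape, under assumptions: the artifact kinds mirror the items named in this article, while the `TechnicalFile` class, the example locations, and the 365-day staleness cutoff are hypothetical choices, not requirements from any regulator or standard.

```python
from dataclasses import dataclass, field
from datetime import date

# Artifact kinds named in the "technical file" recommendation above.
REQUIRED_ARTIFACTS = (
    "model_card",
    "data_provenance_map",
    "evaluation_results",
    "limitations",
    "human_oversight_log",
    "incident_records",
)


@dataclass(frozen=True)
class Artifact:
    kind: str
    location: str  # where the evidence lives (path, URL, ticket ID)
    last_updated: date


@dataclass
class TechnicalFile:
    system_name: str
    artifacts: list[Artifact] = field(default_factory=list)

    def gaps(self, as_of: date, max_age_days: int = 365) -> dict[str, str]:
        """Report required artifacts that are missing or older than max_age_days."""
        latest: dict[str, date] = {}
        for a in self.artifacts:
            if a.kind not in latest or a.last_updated > latest[a.kind]:
                latest[a.kind] = a.last_updated
        issues: dict[str, str] = {}
        for kind in REQUIRED_ARTIFACTS:
            if kind not in latest:
                issues[kind] = "missing"
            elif (as_of - latest[kind]).days > max_age_days:
                issues[kind] = "stale"
        return issues


# Hypothetical file with one current and one outdated artifact.
tech_file = TechnicalFile(
    system_name="resume-screening-model",
    artifacts=[
        Artifact("model_card", "docs/model-card.md", date(2025, 1, 10)),
        Artifact("evaluation_results", "reports/eval-2023.pdf", date(2023, 6, 1)),
    ],
)
issues = tech_file.gaps(as_of=date(2025, 6, 1))
print(issues)
```

Running a check like this on a schedule is one way to make sure the evidence already exists when an audit request arrives.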

8. Key takeaway

The US may not have a national AI certification law yet, but AI oversight is already real, and it is getting more complicated, not less:

- States are auditing bias and enforcing anti-discrimination law against AI tools.

- The federal government has replaced its AI executive orders but preserved core procurement and governance requirements.

- The DoD demands human oversight and traceability.

- Automakers must self-certify safety and report incidents under an updated reporting framework that NHTSA plans to make permanent.

- The White House is simultaneously trying to preempt state laws it considers "onerous", but those laws remain enforceable until courts or Congress say otherwise.

Each domain uses its own mechanism (self-assessment, audit clause, or third-party verification), but they all point toward a single future: AI governance that is risk-based, documented, testable, and ready for external inspection.

The companies that will be best positioned aren't the ones betting on which laws survive — they're the ones building governance frameworks (like ISO 42001) that satisfy any regulator, regardless of jurisdiction.

I created this AI Compliance Decision Tree & Checklist to help you evaluate your own company, as well as your suppliers or vendors, for the AI governance steps required under current and upcoming laws.

The goal is simple: to give you a practical, risk-based pathway for identifying what rules apply, which standards you can adopt voluntarily, and how to prepare documentation before full enforcement begins.

By following this tree, you can determine:

- Whether your organization or suppliers are affected by the EU AI Act, U.S. state-level AI regulations, or sector-specific requirements (such as government contracting, defense, or automotive).

- What level of assessment applies — from internal self-assessment to independent third-party audits.

- Which frameworks (ISO 42001, NIST AI RMF) best support readiness for both European and U.S. markets.

This tool is designed as both a diagnostic map and a compliance register — enabling you to choose the right governance pathway, document your risk management practices, and stay ahead of emerging AI certification and audit requirements worldwide.

And here is the Global regulations overview in the text format of the AI compliance Decision Tree: